Underestimating Volatility in the Cyber Insurance Market

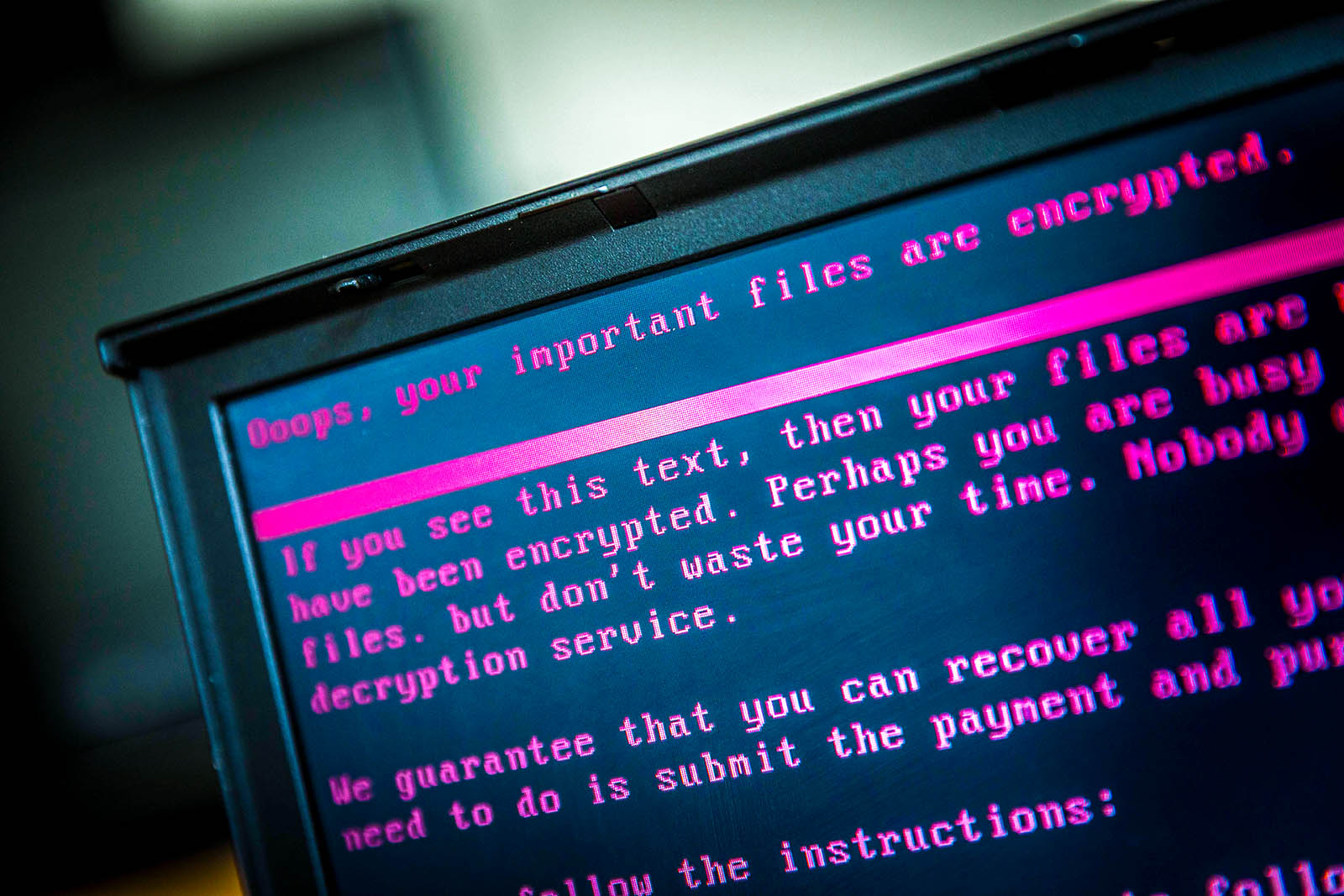

A laptop displays a message after being infected by ransomware as part of a worldwide cyberattack. For most insurance firms, models for volatility are far from robust due to limited clarity on cyber risks.

Photo: Rob Engelaar/AFP/Getty Images

Cyber insurance is the fastest-growing line of insurance business in modern history. It has permeated most traditional lines of business and carries very attractive profit margins. What started as cover to protect companies against hacking now extends to business interruption, extortion, financial fraud, legal liability and system failure arising from cyberattacks.

But while cyber insurance teams have enjoyed the benefits of higher premiums and resulting profits, the broader market systematically underestimates the volatility of the underlying loss distribution.

Discounting Forward-Looking Variables

Traditional approaches to quantifying volatility rely on collecting and analyzing decades of loss information in a relatively stationary world. For most firms, however, the model for volatility is far from robust due to limited clarity on modeled and non-modeled cyber risks. Volatility estimates are generally based on what is known: known threat actors, known attack vectors, historical near-misses and actual events. Such a perspective suffers from recency bias and tends to give decision-makers a false sense of comfort.

Forward-looking variables have played a relatively limited role in business decisions around pricing, capital allocation and reinsurance risk transfer. Generally excluded are technology developments, unknown threat vectors and emerging threat actor groups that render existing preventative measures obsolete. The implied volatility of losses arising from cyberattacks is best estimated through a strong fundamental understanding of developments in technology and of the relevant emerging threats.

Some examples follow:

Scaled ransomware campaigns

Threat actor groups have used ransomware successfully since 2005 for relatively small-scale financial gain. Historically, ransomware campaigns were small, and only in the last year have we observed what an untargeted and indiscriminate campaign could lead to. While most models had considered ransomware as an attack vector, they massively underestimated its scale. The increase in volatility that comes from scaling up an existing attack pattern like ransomware was not considered in pricing cyber insurance policies.

Quantum computing

Quantum computing is another example of a forward-looking technology trend that is not yet commonplace.

Consider the link between quantum computing and cryptography. Widely used public-key encryption schemes such as RSA rest on the principle that large prime numbers are relatively easy to generate and multiply, whereas factoring the resulting product back into its two primes is hard with existing computing capabilities. If quantum computing capabilities at scale become available to nation-state threat actors, systems that are built and secured using such encryption standards will be vulnerable. The development of encryption methods that cannot be cracked using quantum computing is lagging behind the proliferation of quantum computing capabilities. This poses an existential risk to current encryption standards.
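The asymmetry between multiplying and factoring can be seen even at toy scale. The following is a minimal Python sketch, not a description of any real attack: it uses naive trial division, and the function name and the two small primes are chosen purely for illustration. Real keys use primes hundreds of digits long, for which this approach is hopeless on classical hardware.

```python
import math


def factor_by_trial_division(n: int):
    """Return (smallest_factor, cofactor) of an integer n > 1.

    Trial division is the naive approach; its cost grows with the size of
    the smallest prime factor, which is why large moduli are impractical
    to factor this way.
    """
    if n % 2 == 0:
        return 2, n // 2
    # Only odd candidates up to sqrt(n) need to be checked.
    for d in range(3, math.isqrt(n) + 1, 2):
        if n % d == 0:
            return d, n // d
    return n, 1  # no divisor found, so n itself is prime


# Illustrative assumption: two small primes (the 10,000th and 100,000th).
p, q = 104_729, 1_299_709
n = p * q  # the easy direction: one multiplication
recovered = factor_by_trial_division(n)  # the hard direction: tens of thousands of divisions
```

Here one multiplication builds `n`, while recovering `p` takes roughly 52,000 trial divisions, and that gap widens rapidly as the primes grow. A sufficiently large quantum computer running Shor's algorithm would remove this asymmetry, which is the exposure the paragraph above describes.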


Internet of Things

When, in a connected world of day-to-day appliances, digital locks replace physical locks and examples of weaponized household appliances emerge, the exposure profile of insured entities changes quite dramatically. If homeowners’ policies were extended to cover cyber risk, existing pricing and reserving guidelines would not hold. There is evidence in the market that some homeowners’ policies offer the option to insure cyber risk, but it is unclear whether such systemic exposures are considered in pricing decisions.

Massive Underreporting of Breach Incidents

Insurance products that cover data privacy, availability and integrity have seen lower loss ratios and combined ratios than other well-established lines of business across most market participants. One reason is that a substantial majority of breached companies decide not to disclose their breaches publicly, owing to reputational damage, legal liability and increased scrutiny from their customers and the public. The exception is when disclosure is required by law. The true frequency of breaches and the associated volatility have therefore been underestimated in most models in use today.

This apparently favorable loss experience has led many insurers to offer cyber coverage at a substantial discount to existing policyholders from other lines of business, with the goal of gaining market share. For these reasons, pricing levels are determined almost entirely by competitive dynamics rather than by the technical risk associated with the policies.
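The effect of underreporting on frequency estimates can be made concrete with simple arithmetic. The sketch below assumes, purely for illustration, that every breach is disclosed independently with the same probability; the function name and the numbers are hypothetical and are not market data.

```python
def implied_true_frequency(observed_frequency: float, disclosure_rate: float) -> float:
    """Scale an observed breach frequency up by the share of breaches disclosed.

    Assumption (hypothetical): each breach is disclosed independently with
    probability `disclosure_rate`, so observed = true * disclosure_rate.
    """
    if not 0.0 < disclosure_rate <= 1.0:
        raise ValueError("disclosure_rate must be in (0, 1]")
    return observed_frequency / disclosure_rate


# Hypothetical inputs: 0.02 disclosed breaches per company-year,
# with only one breach in four ever made public.
observed = 0.02
true_frequency = implied_true_frequency(observed, disclosure_rate=0.25)
understatement = true_frequency / observed  # factor by which the model is too low
```

Under these assumed inputs the true frequency is four times the observed one. The disclosure rate itself is unknown in practice, so the uncertainty around it feeds directly into the volatility estimate.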

Lack of Losses from Large-Scale Cyber Accumulation Events

There have been several near-misses from an accumulation perspective in the cyber insurance market in the last five years. The absence of a large-scale, industry-wide loss until 2017 led to volatility being underestimated, on the expectation that companies' security defenses and business continuity plans were equipped to handle business interruption resulting from a cyberattack.

This hypothesis has now been turned around completely. Companies impacted by NotPetya experienced months of downtime, and the event showcased significant accumulation impact, with claims being made against property policies. This outcome was unexpected and was not priced into the policies that, accidentally or otherwise, covered this risk. Such silent exposures exist for many insurers, increasing the volatility in the risk profile of carriers running large-scale P&C businesses.
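Why accumulation matters for volatility can be shown with a deliberately simplified calculation. The sketch below is an assumption-laden toy model, not an actuarial method: every policy suffers a fixed loss with the same probability, and the two extremes compared are fully independent losses and a single common shock that hits every policy at once. All names and parameter values are hypothetical.

```python
import math


def portfolio_loss_std(n_policies: int, p_loss: float, loss_amount: float,
                       common_shock: bool) -> float:
    """Standard deviation of total portfolio loss in a toy model.

    Each policy loses `loss_amount` with probability `p_loss`.
    - Independent case: variances add, so std scales with sqrt(n).
    - Common-shock case: all policies lose together, so std scales with n.
    The expected loss (n * p * loss_amount) is identical in both cases.
    """
    per_policy_std = loss_amount * math.sqrt(p_loss * (1.0 - p_loss))
    if common_shock:
        return n_policies * per_policy_std
    return math.sqrt(n_policies) * per_policy_std


# Hypothetical portfolio: 10,000 policies, 1% loss probability, unit loss.
independent_std = portfolio_loss_std(10_000, 0.01, 1.0, common_shock=False)
accumulated_std = portfolio_loss_std(10_000, 0.01, 1.0, common_shock=True)
volatility_ratio = accumulated_std / independent_std  # equals sqrt(n_policies)
```

With the same expected loss, the common-shock portfolio is more volatile by a factor of the square root of the number of policies. A real portfolio sits somewhere between the two extremes, but a model calibrated only on years without an accumulation event implicitly sits near the independent end.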

Introduction of Appropriate Cyber Catastrophe Loads

Pockets of intelligence are emerging about how cyber risk embedded within all lines of business affects pricing and accumulation management needs.

Insurers have begun to determine appropriate cyber catastrophe loads in rating plans. When determining catastrophe loads, not all insured risks are alike, and the ability to understand relativities across these risks is paramount to pricing decisions. A large financial services company with hundreds of vendors, hundreds of millions in revenue and thousands of employees has a different risk profile from a small professional services business with low revenue, few employees and few dependencies.

There is directional consensus in the market that adjusting volatility estimates to account for forward-looking uncertainties is a necessity. Insurers and reinsurers are using models and technology partnerships to broaden their view of this complex risk.