How Trustworthy Is Big Data?

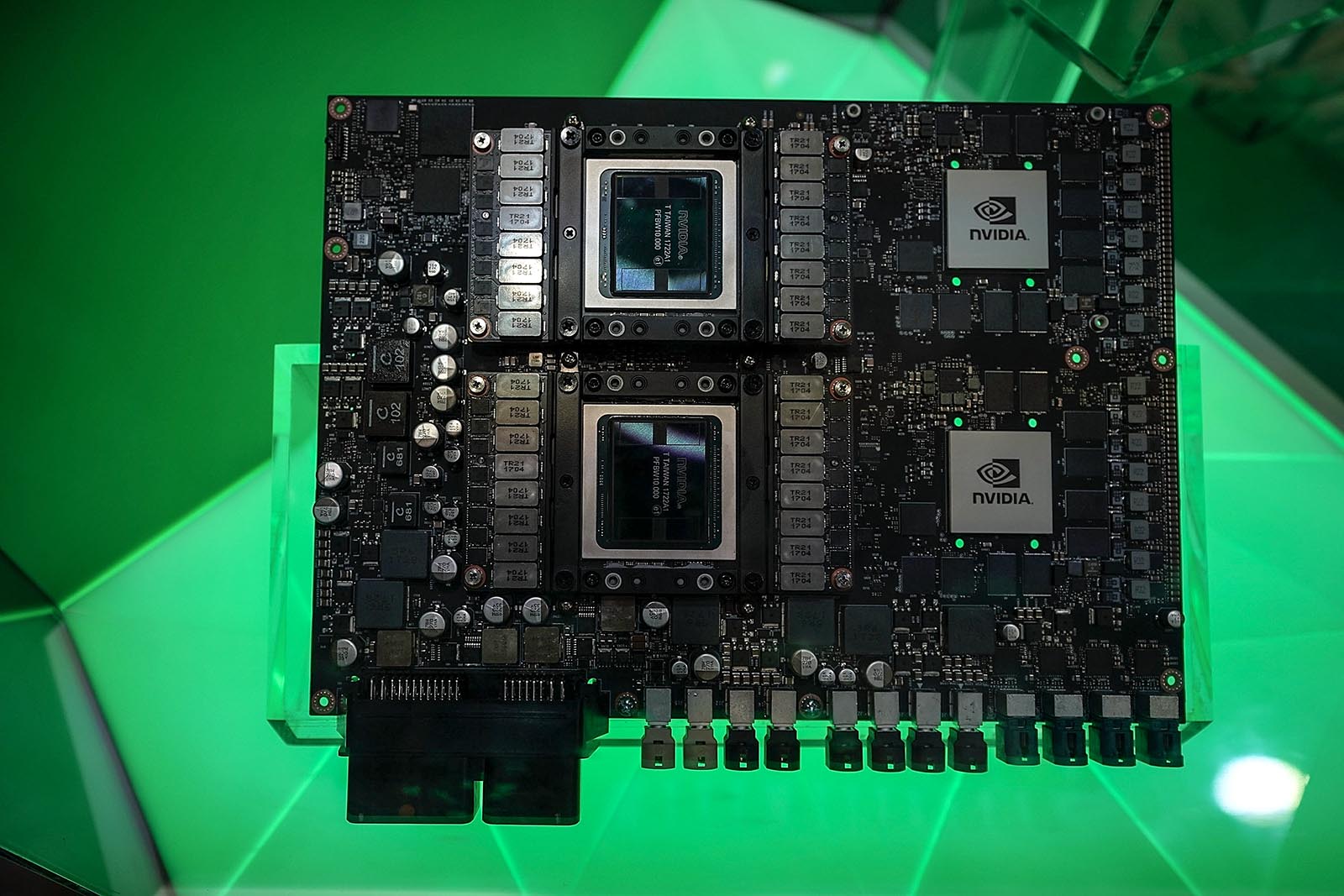

Nvidia Drive Pegasus, the world's first AI supercomputer for level 5 robotaxis, on display during CES 2018 at the Las Vegas Convention Center, January 2018.

Photo: Alex Wong/Getty Images

Over the last decade, big data has gone from being used only in niche applications to becoming an increasingly mainstream part of modern business. But compared to traditional IT architectures, there’s typically a lot less control and governance built into big data systems.

Most organizations base their business decision-making on some form of data warehouse that contains carefully curated data. But big data systems typically store raw, unprocessed data in some form of data lake, where different data reduction, scrubbing, and processing techniques are then applied as needed. This so-called “schema-on-read” approach contrasts with the traditional “schema-on-write” approach of data warehousing. It brings some big benefits, notably greater flexibility and more scalable processing than traditional approaches. But there are also disadvantages, such as few or no initial controls over the quality of the information stored in the data lake.
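To make the contrast concrete, here is a minimal Python sketch of the two approaches. The record layout, the field names `customer_id` and `amount`, and the helper functions are all hypothetical, chosen only for illustration. The point is when bad records surface: at load time in one case, at query time in the other.

```python
import json

# Hypothetical raw events as they might arrive; nothing guarantees their shape.
RAW_EVENTS = [
    '{"customer_id": 1, "amount": "19.99"}',
    '{"customer_id": "2", "amount": 5}',
    '{"customer": 3}',      # required fields are missing
    'not json at all',      # corrupt record
]


def validate(record):
    """Apply the (assumed) schema: customer_id as int, amount as float."""
    return {
        "customer_id": int(record["customer_id"]),
        "amount": float(record["amount"]),
    }


def schema_on_write(lines):
    """Warehouse style: validate on load, so bad records never get stored."""
    stored, rejected = [], 0
    for line in lines:
        try:
            stored.append(validate(json.loads(line)))
        except (ValueError, KeyError, TypeError):
            rejected += 1
    return stored, rejected


def schema_on_read(lines):
    """Lake style: store everything as-is, validate only when a query runs."""
    lake = list(lines)

    def query_total():
        total, skipped = 0.0, 0
        for line in lake:
            try:
                total += validate(json.loads(line))["amount"]
            except (ValueError, KeyError, TypeError):
                skipped += 1  # quality problems are discovered only now
        return total, skipped

    return lake, query_total


stored, rejected = schema_on_write(RAW_EVENTS)
lake, query_total = schema_on_read(RAW_EVENTS)
total, skipped = query_total()
print(len(stored), rejected)   # records kept vs. rejected at load time
print(len(lake), skipped)      # records kept vs. skipped at query time
```

In this toy version both paths end up ignoring the same two bad records. The difference is that the lake keeps them, so every later analysis has to decide again how to handle them.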

So can businesses actually trust the data coming from these new systems?

There are many aspects to the question, but here are some of the key practical dimensions that organizations should consider when embarking on big data projects:

Data completeness and accuracy. For many big data sources, the problems start as soon as the data is collected, because the recorded values may be approximations rather than exact real values—uncertainty and imprecision might be an inherent part of the process. Consider an organization that wants to improve its marketing spend by analyzing customer sentiment derived from social media. However, language is full of nuance, making it difficult to accurately determine the underlying sentiment of a tweet or blog post. Research has shown that people even interpret the same emoji in completely different ways.

Internet of Things data may also only be approximate. The use of cheap sensors with “good enough” accuracy that only provide information at regular intervals runs the risk of missing fleeting events. And with large volumes of sensors, it’s probable that some may not be working at any given moment, or data may be lost in transmission, requiring interpretation of missing values.
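As a rough illustration of what "interpretation of missing values" can involve, the sketch below finds gaps in readings that were supposed to arrive at a fixed interval and fills them by linear interpolation. The function names, the 10-second interval, and the sample readings are assumptions made for this example. Linear interpolation is only one possible choice, and it cannot recover a fleeting event that happened inside a gap, which is why each estimated value is flagged.

```python
def find_gaps(timestamps, interval):
    """Return (previous, next, missing_count) for each gap in sorted timestamps."""
    gaps = []
    for prev, curr in zip(timestamps, timestamps[1:]):
        missing = (curr - prev) // interval - 1
        if missing > 0:
            gaps.append((prev, curr, missing))
    return gaps


def fill_linear(readings, interval):
    """Fill gaps in sorted (timestamp, value) readings by linear interpolation.

    Returns (timestamp, value, is_estimated) tuples so that downstream users
    can tell measured values from estimated ones.
    """
    filled = []
    for (t0, v0), (t1, v1) in zip(readings, readings[1:]):
        filled.append((t0, v0, False))
        steps = (t1 - t0) // interval
        for k in range(1, steps):
            estimate = v0 + (k / steps) * (v1 - v0)
            filled.append((t0 + k * interval, estimate, True))
    if readings:
        filled.append((*readings[-1], False))
    return filled


# Hypothetical temperature readings, expected every 10 seconds.
readings = [(0, 20.0), (10, 21.0), (40, 24.0)]
print(find_gaps([t for t, _ in readings], 10))
for row in fill_linear(readings, 10):
    print(row)
```

Keeping the `is_estimated` flag alongside each value is a small design choice that supports transparency: an analyst can exclude estimated points or weight them differently.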

Data credibility. Big data often comes from systems not directly controlled by an organization and may contain inherent biases or outright false values (for example because of bots in social media). And the schema-on-read approach means that you might not be able to ascertain the credibility of the data until after you’ve tried to use it for analysis.

Data consistency. Traditional database systems use sophisticated methods to ensure that users get consistent answers to queries, even as new data is being added. To achieve higher processing capabilities, some big data systems relax these constraints, using eventually consistent systems, where two people may get different answers to simultaneous questions, at least in the short term.
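The effect can be shown with a toy model. The sketch below is not how any particular product works. It is a deliberately simplified simulation, with hypothetical class and key names, in which writes reach one replica immediately and the other only when a replication step runs.

```python
class Replica:
    """One copy of the data."""

    def __init__(self):
        self.data = {}

    def read(self, key):
        return self.data.get(key)


class EventuallyConsistentStore:
    """Toy model: writes hit the primary at once and the secondary later."""

    def __init__(self):
        self.primary = Replica()
        self.secondary = Replica()
        self.pending = []  # writes not yet copied to the secondary

    def write(self, key, value):
        self.primary.data[key] = value
        self.pending.append((key, value))

    def replicate(self):
        """Apply pending writes to the secondary, in order."""
        for key, value in self.pending:
            self.secondary.data[key] = value
        self.pending.clear()


store = EventuallyConsistentStore()
store.write("orders_today", 100)
store.replicate()
store.write("orders_today", 101)

# Two users asking the same question at the same moment:
print(store.primary.read("orders_today"))    # sees the latest write
print(store.secondary.read("orders_today"))  # still sees the older value

store.replicate()
print(store.secondary.read("orders_today"))  # now agrees with the primary
```

For a dashboard that refreshes every few minutes, this short-lived disagreement may not matter. For a decision that depends on an exact figure at an exact moment, it might.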

Data processing and algorithms. Big data is typically captured in low-level detail, and the extraction of usable information may require extensive processing, interpretation, and the use of data science algorithms. There’s a greater potential for bias and incorrect conclusions than with traditional systems. For example, self-driving cars rely on algorithms and image recognition to read traffic signs, and researchers have shown that small cosmetic changes to a sign can cause these systems to misread it.

Data validity. No matter how accurate the underlying data, judgment is still required to determine whether it is an appropriate and useful source of information for a specific business question. Social sentiment data may not be a useful representation of customer satisfaction for a segment that never uses social media.

For all these reasons, big data is necessarily at best a fuzzy source of truth—but that does not mean that it cannot be used for important business decisions. For example, a sharp decline in customer sentiment for a particular product in social media may be a useful leading indicator of poor financial results in the future.

So what can organizations do to strengthen trust and to make sure that big data is used appropriately?

Define the ROI for big data quality. Many organizations suspect they suffer from poor data quality but have no sensible basis for determining whether the problems warrant any investment in improvements. Organizations should identify areas where big data could provide business value, estimate what the impact of poor-quality information would be, ascertain the data’s current reliability, and determine how much investment might be required.
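One simple way to put these four estimates together is sketched below. The formula is an illustrative assumption, not an established standard: it treats the loss from poor data as the value at stake multiplied by the error rate, and every figure in the example is hypothetical.

```python
def data_quality_roi(value_at_stake, error_rate_now, error_rate_after, investment):
    """Return ROI as a fraction: (avoided loss - investment) / investment.

    Assumes, for illustration only, that losses scale linearly with the
    share of decisions affected by bad data.
    """
    if investment <= 0:
        raise ValueError("investment must be positive")
    avoided_loss = value_at_stake * (error_rate_now - error_rate_after)
    return (avoided_loss - investment) / investment


# Hypothetical inputs: 1,000,000 in decisions depend on the data, 10% of them
# are currently affected by bad data, a cleanup is expected to cut that to 4%,
# and the cleanup costs 30,000.
roi = data_quality_roi(1_000_000, 0.10, 0.04, 30_000)
print(f"Estimated ROI: {roi:.0%}")
```

Even a rough calculation like this gives a basis for comparing data quality work against other investments, provided the inputs are presented as estimates.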

Robust governance. Even more than with traditional IT architectures, big data requires systems for determining and maintaining data ownership, data definitions, and data flows. New data orchestration systems track the full information lifecycle of big data and facilitate transfers to more traditional systems in ways that meet the governance and security needs of the enterprise.

Transparency. The appropriateness of any big data source for decision-making should be made clear to business users. Any known limitations of the data accuracy, sources, and bias should be readily available. Some organizations use named information quality standards for different data sources, such as gold, silver, and bronze, with corresponding levels of support and guarantees.
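A tiering scheme like this can be made machine-readable so that tools can check it before a data source is used. The sketch below is one hypothetical way to do that. The tier names follow the gold, silver, and bronze example above, while the source names, the limitation notes, and the `usable_for` helper are invented for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    BRONZE = 1
    SILVER = 2
    GOLD = 3


@dataclass(frozen=True)
class DataSource:
    name: str
    tier: Tier
    known_limitations: tuple = ()


def usable_for(source, minimum_tier):
    """True if the source meets the minimum tier a decision requires."""
    return source.tier.value >= minimum_tier.value


# Hypothetical catalog entries.
finance = DataSource("finance_warehouse", Tier.GOLD)
sentiment = DataSource(
    "social_sentiment",
    Tier.BRONZE,
    known_limitations=("may include bot traffic", "covers social media users only"),
)

for source in (finance, sentiment):
    ok = usable_for(source, Tier.SILVER)
    print(source.name, source.tier.name, "ok" if ok else "below required tier")
    for note in source.known_limitations:
        print("  limitation:", note)
```

Storing the known limitations next to the tier keeps the caveats attached to the data rather than in a separate document that business users may never see.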

Training. Big data analysis is most appropriate for data scientists with deep expertise in the opportunities and limitations of the data and techniques involved. But organizations must ensure that less-skilled business users also understand the consequences of using big data for decisions in their roles.

Ethics. Big data and the Internet of Things offer unprecedented opportunities to monitor business processes that were previously invisible. But they also risk being intrusive to customers, employees, and society as a whole, as shown by the infamous example of Target figuring out that a teen girl was pregnant before her father did. Just because something is now feasible doesn’t mean that it’s a good idea. If your customers would find it creepy to discover just how much you know about their activities, it’s probably a good indication that you shouldn’t be doing it.

Organizations may want to introduce explicit consideration of ethics into new project decisions as part of their governance processes. And finally, you may want to collaborate with organizations such as the Partnership on AI, which was created to study and formulate best practices on AI technologies and serve as an open platform for discussion and engagement about AI and its influences on people and society.

Big data is a key part of digital transformation and the business models of the future. Organizations that have robust systems in place and that ensure they can be trusted will be better positioned to take advantage of these powerful new technologies.