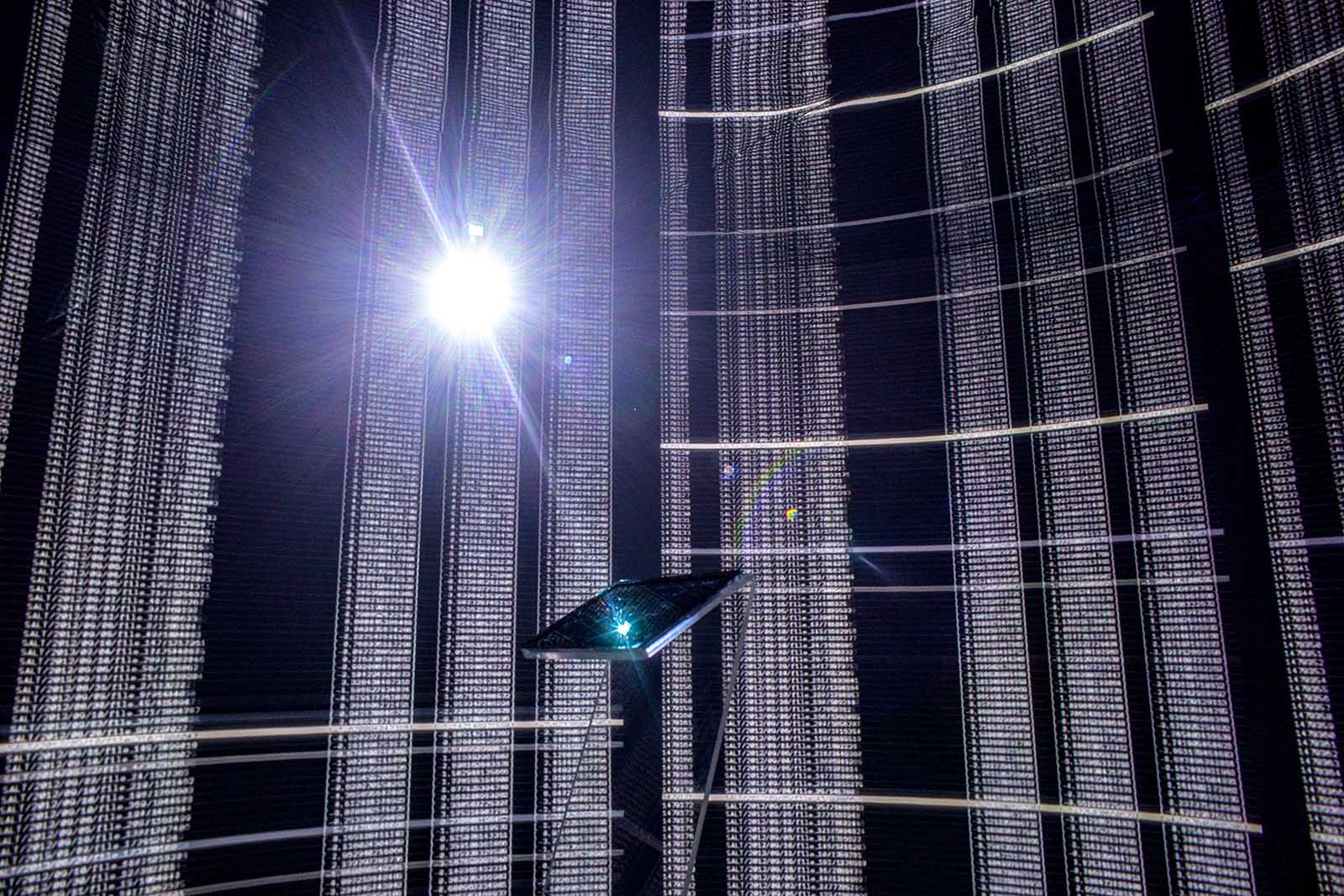

A tablet on display in a high-tech art installation in Turkey. As artificial intelligence becomes more integrated into life and work, questions surrounding the ethical use of AI will have to be answered.

Photo: Chris McGrath/Getty Images

This is the final article in a weeklong series about artificial intelligence.

As artificial intelligence becomes more widespread across the global business landscape, workplace leaders are grappling with increasingly nuanced questions about its ethical use and application.

Matthew Stepka, a former vice president at Google and current executive-in-residence at the Haas School of Business at the University of California, Berkeley, has studied many of these issues in his role as a leader in the field. For example, how is employee data used externally? In the growing gig economy, do contracted workers have the same rights and expectations of privacy as salaried employees? Who are the vulnerable parties in these scenarios?

Mr. Stepka spoke to BRINK about the nature and use of machine learning, data collection and the broader philosophical and existential questions arising from the developing field of artificial general intelligence.

BRINK: Is there a code of ethics emerging around AI in the workplace?

Mr. Stepka: The big question is really about the ethics of using data. What data can be used in a workplace setting, specifically? Employees don’t have a lot of rights, basically. Typically, employers are able to use whatever data employees create on their systems.

Beyond that, I don’t think people fully understand this yet. I think consumers are waking up more and more to how data is being used. And some legislation has been proposed on how data can be used, in California in particular, as well as, of course, in Europe.

So data is the singular fuel for AI, the food it runs on. I think that’s where most of the ethical restraints should be placed.

BRINK: Are companies adopting different ethical practices to address some of these issues?

Mr. Stepka: I’ll tell you, conversations I’ve heard on this topic start with this statement: “Any data you put on your company’s machine, anything related to your work with that company, [companies] don’t feel too constrained in using to protect their interests.”

If you’re getting your Gmail on a work computer, all those kinds of things are encrypted, and they don’t have access to that data anyway. They might know you’re accessing Gmail, but they don’t know what you’re doing. They can’t gain access to that data, even through the AI. For anything else, they know when you’re badging in, when you’re using your company phone and where you’re located.

I think it’s more about putting employees on notice that hey, there is AI on the machine, we’re going to be using it to enforce our policies, our rules for privacy, abuses, etc., to make sure people are being good managers and good employees under our policies.

BRINK: Is there a discussion around what can and should be done with employee data?

Mr. Stepka: The employee-employer relationship is still wide open at the moment. The whole idea of company and employee is changing. There is, obviously, a big debate about Uber drivers and everybody else: Are you an employee? It gets a little different if you’re a contracted company working with them; that is typically covered in the contract you sign with that company. In the case of Uber drivers, the company doesn’t call them employees because they may not legally be employees.

That becomes an interesting ethical question, one that’s playing out in the court of public opinion. Obviously, you can contract for anything in most cases right now. You can say, “Do you want to work with us? We’re a big company; you’re a small company, or an individual person or contractor.” We can effectively prolong that data, kind of controlling you. They might require you to share this visualization or that mapping, for example.

BRINK: Should regulation play a role in any of this?

Mr. Stepka: I think there are a couple of things that would be good principles. First, a requirement to disclose to your employees, at some level, what you’re doing is probably a good idea. Employees are consumers, and they are used to a certain expectation of privacy. They probably don’t realize how little privacy they have in this situation.

The second thing is, there probably should be some carve-out to say that, nonetheless, companies don’t have unlimited use of the data. You don’t have unlimited use of this data if it really has nothing to do with your policies for your business, for example.

The third thing might be this idea that in situations where you have a conflict of interest between the employee and the employer, the employer cannot use this data against the employee.

BRINK: Do you think that, as the technology progresses, it will be possible to create ethical AI machines?

Mr. Stepka: There are essentially three things that could control AI. The easiest is access to data, which is what feeds it. So you control what data it can and cannot access.

The second part is output. That is to say, whatever data you have, you can’t do certain things. The example I give there is the self-driving car. No matter what the AI recommends, you can’t go faster than 25 miles an hour. You put some logic on top of the output so it can’t do a certain thing. In the case of the employer and the employee, you might say something like, “No matter what, this data can’t be exposed externally.”

Then the third option, which is more complicated but frankly also more nuanced, is controlling the process: how to actually make it efficient and understand what the AI is doing. That can only really be done with another bot, creating bots to enforce policy rules like the ones I mentioned. They would monitor systems to make sure none of the AI is violating those rules.
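To make the three control points more concrete, here is a minimal Python sketch. It is a hypothetical illustration, not a system Mr. Stepka or any company described: the allowed field names, the `exposed_externally` flag and the function names are assumptions, and the 25-mph cap is borrowed from his self-driving example.

```python
# Hypothetical sketch of three ways to constrain an AI system.
# All names, fields and policies below are illustrative assumptions.

# 1. Data access: only these fields may ever reach the model (assumed allowlist).
ALLOWED_FIELDS = {"badge_time", "device_id"}

# 2. Output: a hard limit applied on top of whatever the model recommends.
SPEED_LIMIT_MPH = 25.0


def filter_inputs(record: dict) -> dict:
    """Drop every field the model is not permitted to read."""
    return {key: value for key, value in record.items() if key in ALLOWED_FIELDS}


def cap_speed(recommended_mph: float) -> float:
    """Override the model's recommendation so it never exceeds the fixed limit."""
    return min(recommended_mph, SPEED_LIMIT_MPH)


def audit(action_log: list[dict]) -> list[dict]:
    """3. Process: a separate monitor that flags logged actions breaking a policy rule.

    Here the only rule checked is the assumed "no external exposure" policy.
    """
    return [action for action in action_log if action.get("exposed_externally")]
```

The design choice is that each control sits outside the model itself: the first two are simple filters on what goes in and what comes out, while the third is a separate checker reviewing a record of what the system did, which is closer to the "bots enforcing policy rules" idea.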

BRINK: Are you an optimist about this technology, or do you think it has the potential to cause great harm?

Mr. Stepka: I think we should be optimists overall. There’s great potential for value creation to help solve real problems for humanity, for the world. I think lots of great things will come from it.

On the near-term stuff we’re talking about here, machine learning applications that exist today or are five to 10 years out, we should be vigilant. We have to think these things through, and we haven’t figured it out yet. There are a lot of questions. How do we make sure they do the right thing? How much information do you really give them? We have a lot to figure out.